High-performance creative is rare in social advertising. In our experience, after spending over $3 billion driving UA across Facebook and Google, usually only one out of 17 to 20 ads can beat the “best performing control” (the top ad). If a piece of creative doesn’t outperform the best control, you lose money running it. Losers are killed quickly, and winners are scaled profitably.

The reality is that the vast majority of ads fail. The chart below shows the results of over 17,100 different ads, with spend distributed based on ad performance. As you can see, only a handful of those ads drove the majority of the profitable spend.

The high failure rate of most creative shapes creative strategy, budgets, and ad testing methodology. If you can’t test ads quickly and affordably, your campaign’s financial performance is likely to suffer from a lot of non-converting spend. But testing alone isn’t enough. You also must generate enough original creative concepts to fuel testing and uncover winners. Because we’ve found over the years that roughly 19 out of 20 ads fail (about a 5% success rate), you don’t need just one new creative: you need 20 or more original ideas to sustain performance and scale!

And you need all that new creative fast because creative fatigues quickly. You may need 20 new creative concepts every month, or possibly even every week depending on your ad spend and how your title monetizes (IAA or IAP). The more spend you run through your account, the more likely it is that your ad’s performance will decline.

Why is the Control Video so Hard to Beat?

Creative Testing: Our Unique Way

Let us set the stage for how and why we’ve been doing creative testing in a unique way. We test a lot of creative. In fact, we produce and test more than 100,000 videos and images yearly for our clients, and we’ve performed over 10,000 A/B and multivariate tests on Facebook and Google.

We focus on these verticals: gaming, e-commerce, entertainment, automotive, D2C, financial services, and lead generation. When we test, our goal is to compare new concepts vs. the winning video (control) to see if the challenger can outperform the champion. Why? If you can’t outperform the best ad in a portfolio, you will lose money running the second or third-place ads.

While we have not tested our process beyond the aforementioned verticals, we have managed over $3 billion in paid social ad spend and want to share what we’ve learned. Our testing process is designed to save both time and money by killing losing creatives quickly and significantly reducing non-converting spend. It will generate both false negatives and false positives. We typically let our tests run for 2 to 7 days, which provides enough time to gather data without the capital and time needed to reach statistical significance (StatSig). We always run our tests using our software, AdRules, via the Facebook API. Our insights are specific to the above scenarios and do not represent how all testing on Facebook’s platform operates. In some cases, it is valuable to retain learning without obstructing ad delivery.

To be clear, our process is not the Facebook best practice of running a split test and allowing the algorithm to reach statistical significance (StatSig), which then moves the ad set out of the learning phase and into the optimized phase. The insights we’ve drawn are specific to the scenarios we outline here and do not represent how all testing on Facebook’s platform operates. In some cases, it is valuable to have old creative retain learning so that you can A/B test seamlessly without obstructing ad delivery.

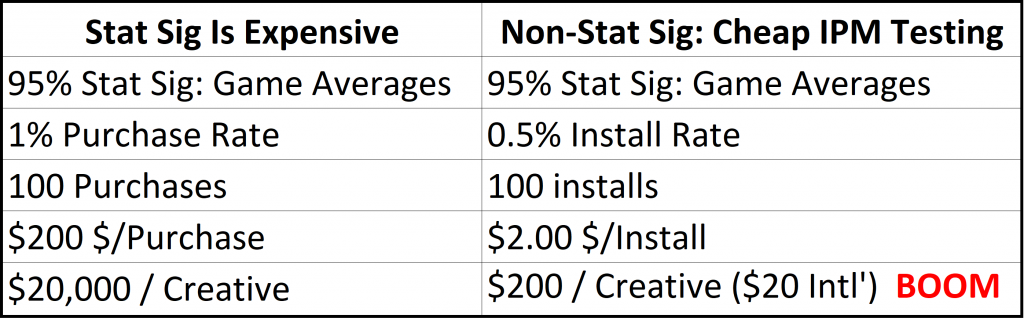

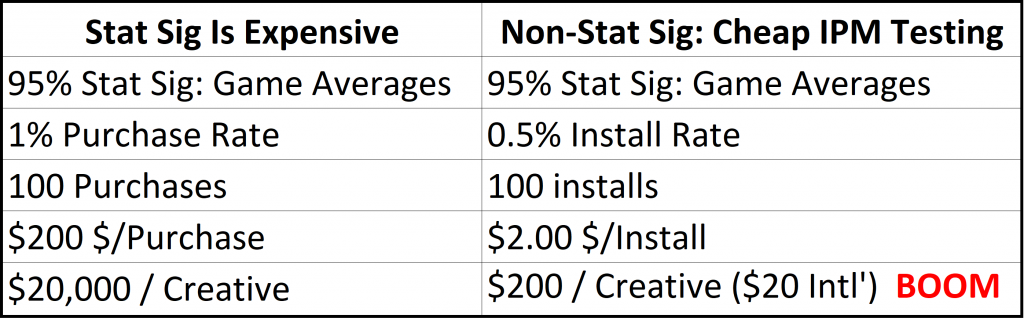

Creative Testing: Statistical Significance vs Cost-Effective

Let’s take a closer look at the cost aspect of creative testing.

In classic testing, you need a 95% confidence rate to declare a winner, exit the learning phase, and reach StatSig. That’s nice to have, but getting a 95% confidence rate for in-app purchases may end up costing you $20,000 per creative variation.

Why so expensive?

As an example, to reach a 95% confidence level, you’ll need about 100 purchases. With a 1% purchase rate (which is typical for gaming apps), and a $200 cost per purchase, you’ll end up spending $20,000 for each variation in order to accrue enough data for that 95% confidence rate. There aren’t a lot of advertisers who can afford to spend $20,000 per variation, especially if 95% of new creative fails to beat the control.
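The arithmetic behind that figure can be sketched in a few lines of Python. The function and parameter names are ours, for illustration only; the 100-purchase target and $200 cost per purchase come from the example above.

```python
def cost_to_reach_events(events_needed: int, cost_per_event: float) -> float:
    """Spend required for one creative variation to accrue the target number of events."""
    return events_needed * cost_per_event


# Figures from the example above: about 100 purchases at $200 per purchase.
purchase_test_cost = cost_to_reach_events(events_needed=100, cost_per_event=200.0)
print(f"Spend per variation: ${purchase_test_cost:,.0f}")  # Spend per variation: $20,000
```

Multiply that by the number of variations in a test and the cost of waiting for purchase-level confidence adds up quickly.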

So, what to do?

What we do is move the conversion event we’re targeting up the sales funnel. For mobile apps, instead of optimizing for purchases, we optimize for installs per thousand impressions (IPM). For websites, we’d optimize for an impression-to-top-funnel conversion rate. Again, this is not a Facebook-recommended best practice; it is our own voodoo magic/secret sauce that we’re brewing.
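For readers new to the metric, IPM is conventionally calculated as installs per thousand impressions. A minimal sketch, with a function name and sample numbers that are hypothetical and not taken from any client account:

```python
def ipm(installs: int, impressions: int) -> float:
    """Installs per mille: installs generated per 1,000 ad impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return 1000 * installs / impressions


# Hypothetical numbers for illustration: 100 installs from 12,500 impressions.
print(ipm(100, 12_500))  # 8.0
```

Because installs accrue far faster than purchases, an ad reaches a usable IPM reading with much less spend.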

IPM Testing Is Cost-Effective

A concern with our process is that ads with high CTRs and high conversion rates for top-funnel events may not be true winners for down-funnel conversions and ROI / ROAS. But while there is a risk of identifying false positives and negatives with this method, we’d rather take that risk than spend the time and expense of optimizing for StatSig bottom-funnel metrics.

To us, it is more efficient to optimize for IPMs vs. purchases. Most importantly, it means you can run tests for less money per variation because you are optimizing towards installs vs purchases. For many advertisers, that alone can make more testing financially viable. $200 testing cost per variation versus $20,000 testing cost per variation can mean the difference between being able to do a couple of tests versus having an ongoing, robust testing program.

We don’t just test a lot of new creative ideas. We also test our creative testing methodology. That might sound a little “meta,” but it’s essential for us to validate and challenge our assumptions and results. When we choose a winning ad out of a pack of competing ads, we’d like to know that we’ve made a good decision.

Because the outcomes of our tests have consequences – sometimes big consequences – we test our testing process. We question our testing methodology and the assumptions that shape it. When most of our new concepts fail to test well, our entire team reacts by killing the losing concepts and pivoting the creative strategy based on those results to try other ideas.

Control Video: How We’ve Been Testing Creative Until Now

When testing creative, we would typically test three to six videos along with a control video using Facebook’s split test feature. We would show these ads to broad or 5-10% LAL (lookalike) audiences, restrict distribution to the Facebook newsfeed and to Android only, and use mobile app install (MAI) bidding to get about 100-250 installs.

If one of those new “challenger” ads beat the control video’s IPM or came within 10%-15% of its performance, we would launch those potential new winning videos into the ad sets with the control video and let them fight it out to generate ROAS.

We’ve seen hints of what we’re about to describe across numerous ad accounts and have confirmed with other 7-figure spending advertisers that they have seen the same thing. But for purposes of explanation, let’s focus on one client of ours and how their ads performed in creative tests.

In November and December 2019, we produced more than 60 new video concepts for this client. All of them failed to beat the control video’s IPM. This struck us as odd and statistically very unlikely. We expected to generate a new winner 5% of the time, or 1 out of 20 videos, so about 3 winners. Since we felt confident in our creative ideas, we decided to look deeper into our testing methods.
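One way to gauge how surprising that losing streak is: if we assume each concept independently has the same 5% chance of beating the control (a simplification on our part, since real concepts are neither independent nor equally strong), the chance of zero winners in 60 tries follows directly. The function name below is hypothetical.

```python
def prob_no_winner(num_concepts: int, win_rate: float) -> float:
    """Chance that none of `num_concepts` independent tests produces a winner."""
    return (1.0 - win_rate) ** num_concepts


concepts = 60     # new video concepts produced for the client
win_rate = 0.05   # historical rate: about 1 winner per 20 concepts

print(f"Expected winners: {concepts * win_rate:.0f}")
print(f"Chance of zero winners: {prob_no_winner(concepts, win_rate):.1%}")
```

Under these assumptions the chance of 60 straight losers comes out below 5%. That is not strictly impossible, but it is unusual enough to justify questioning the test setup before questioning the creative.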

Traditional testing methodology includes the idea of testing the testing system itself, known as an A/A test. A/A tests are like A/B tests, but instead of testing multiple creatives, you test the same creative in each “slot” of the test.

If your testing system/platform is working as expected, all “variations” should produce similar results, assuming you get close to statistical significance. If your A/A test results are very different, and the testing platform/methodology concludes that one variation significantly outperforms or underperforms the others, there could be an issue with the testing method or the quantity of data gathered.
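As a rough illustration of what “very different” might mean, the sketch below runs a pooled two-proportion z-test on the install rates of two A/A slots. This is a generic textbook check, not the method used by Facebook or by AdRules, and the install and impression counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(installs_a: int, impressions_a: int,
                           installs_b: int, impressions_b: int) -> float:
    """Two-sided p-value for the difference between two install rates (pooled z-test)."""
    rate_a = installs_a / impressions_a
    rate_b = installs_b / impressions_b
    pooled = (installs_a + installs_b) / (impressions_a + impressions_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if std_err == 0:
        return 1.0  # no installs (or all installs) in both slots: nothing to compare
    z = (rate_a - rate_b) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical A/A results: the same creative running in two slots.
print(two_proportion_p_value(100, 10_000, 40, 10_000))  # tiny p-value: suspicious for identical ads
print(two_proportion_p_value(100, 10_000, 95, 10_000))  # large p-value: consistent with noise
```

A very small p-value between two copies of the same ad suggests that something other than the creative is driving the difference.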

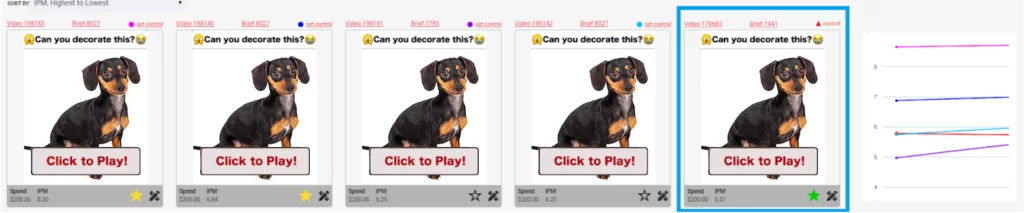

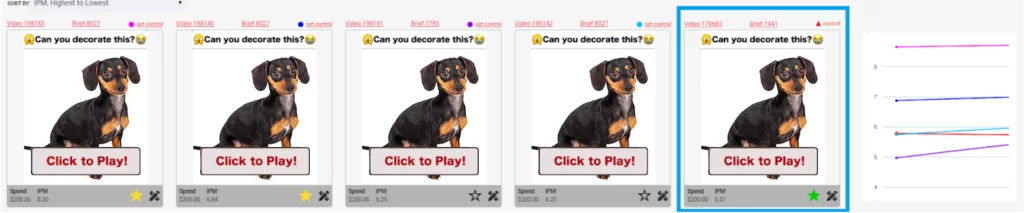

First A/A test of video creative: Give Control a Performance Boost

Here’s how we set up an A/A test to validate our non-standard approach to Facebook testing. The purpose of this test was to understand if Facebook maintains a creative history for the control and thus gives the control a performance boost making it very difficult to beat – if you don’t allow it to exit the learning phase and reach statistical relevance.

We copied the control video four times and added one black pixel in different locations in each of the new “variations.” This allowed us to run what would look like the same video to humans but would be different videos in the eyes of the testing platform. The goal was to get Facebook to assign new hash IDs for each cloned video and then test them all together and observe their IPMs.
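We don’t know how Facebook actually fingerprints creative, so treat the following as a conceptual sketch only. It uses a SHA-256 digest of raw pixel bytes as a stand-in for a platform’s creative ID and shows that changing a single pixel is enough to produce a different digest. The tiny 4x4 grayscale “frame” and the helper names are hypothetical.

```python
import hashlib

WIDTH, HEIGHT = 4, 4  # tiny stand-in frame; a real video frame is far larger


def content_hash(pixels: bytes) -> str:
    """SHA-256 digest of raw pixel bytes, standing in for a platform's creative ID."""
    return hashlib.sha256(pixels).hexdigest()


def with_black_pixel(pixels: bytes, x: int, y: int) -> bytes:
    """Return a copy of a grayscale frame with the pixel at (x, y) set to black (0)."""
    frame = bytearray(pixels)
    frame[y * WIDTH + x] = 0
    return bytes(frame)


control = bytes([255] * (WIDTH * HEIGHT))  # all-white grayscale frame
corners = [(0, 0), (3, 0), (0, 3), (3, 3)]  # one black pixel in a different spot per clone
clones = [with_black_pixel(control, x, y) for x, y in corners]

hashes = {content_hash(control), *(content_hash(clone) for clone in clones)}
print(len(hashes))  # 5 distinct digests: the control plus four one-pixel clones
```

To a human viewer the clones are indistinguishable from the control, but a content-based identifier of this kind would treat each one as a new asset.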

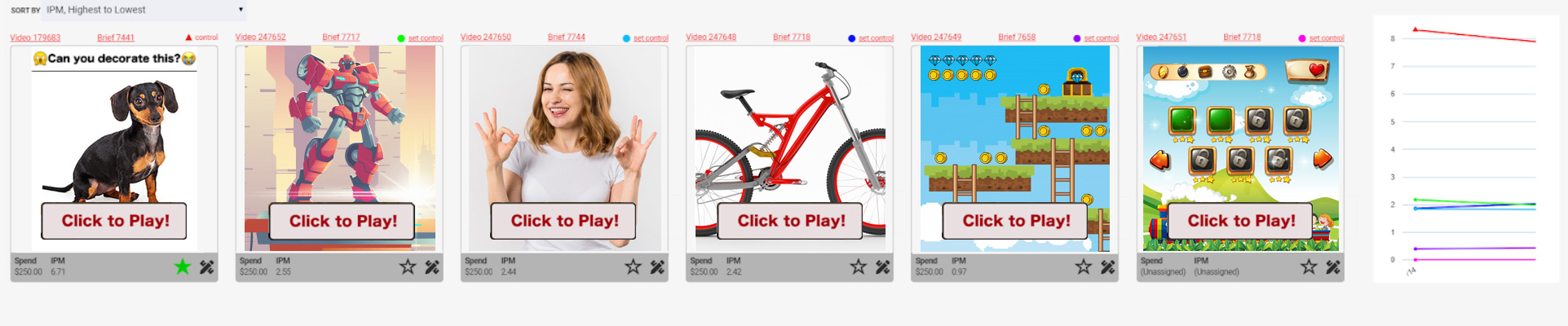

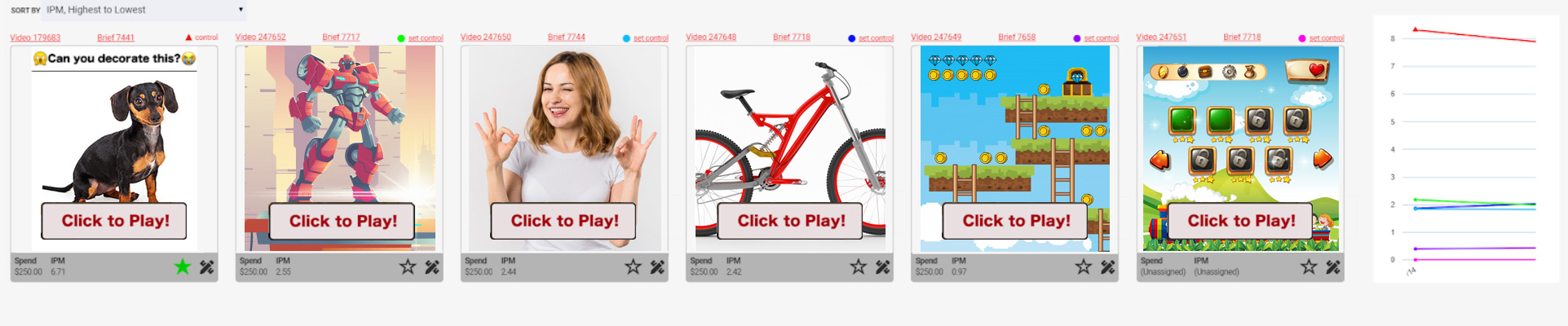

These are the ads we ran… except we didn’t run the hotdog dog; I’ve replaced the actual ads with cute doges to avoid disclosing the advertiser’s identity. The IPM for each ad appears at the far right of the image.

Things to note here:

The far-right ad (in the blue square) is the control.

All the other ads are clones of the control with one black pixel added.

The far-left ad/clone outperformed the control by 149%. As described earlier, a difference like that shouldn’t happen if the platform were truly variation-agnostic. BUT, to save money, we did not follow the best practice of allowing the ad set(s) to exit the learning phase.

We ran this test for only 100 installs, which is our standard operating procedure for creative testing.

Once we completed our first test to 100 installs, we paused the campaign to analyze the results. Then we turned the campaign back on to scale up to 500 installs in an effort to get closer to statistical significance. We wanted to see if more data would result in IPM normalization (in other words, if the test results would settle back down to more even performance across the variations). However, the results of the second test remained the same. Note: the ad set(s) did not exit the learning phase and we did not follow Facebook’s best practice.

The results of this first test, while not statistically significant, were surprising enough to merit additional tests. So we tested on!

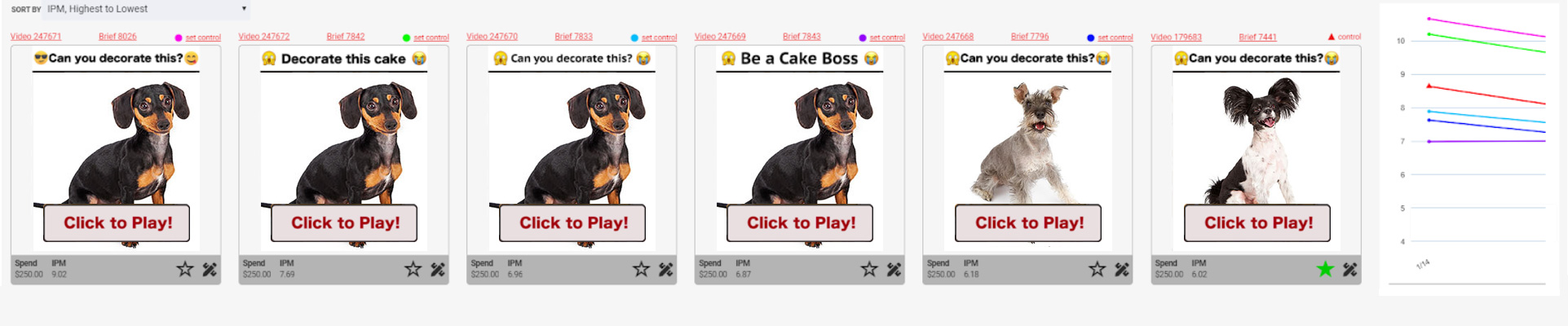

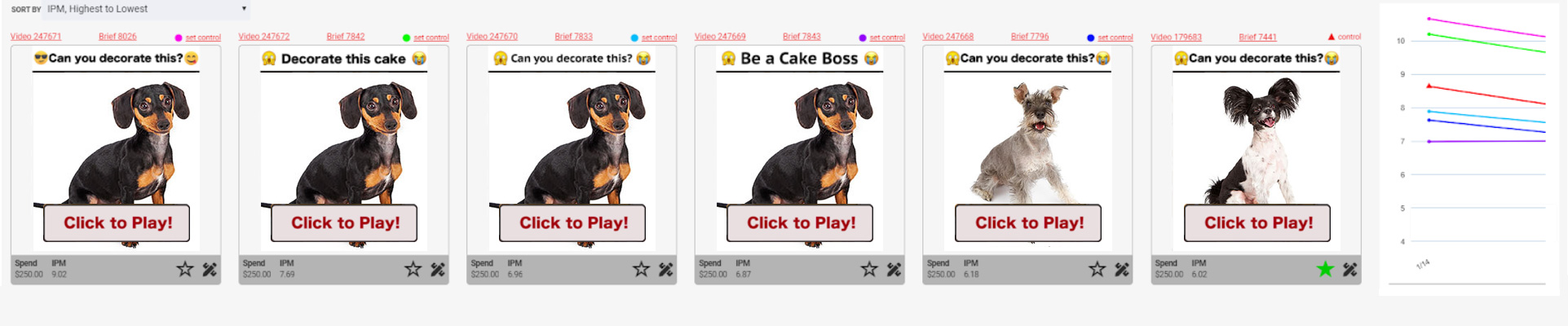

Second A/A test of video creative: Give controls different headers

For our second test, we ran the six videos shown below. Four of them were controls with different headers; two of them were new concepts that were very similar to the control. Again, we didn’t run the hotdog dogs; they’ve been inserted to protect the advertiser’s identity and to offer you cuteness!

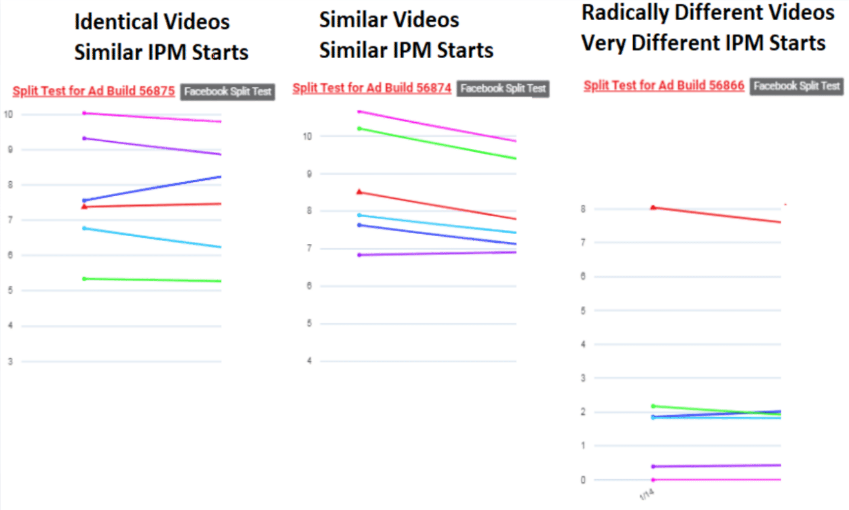

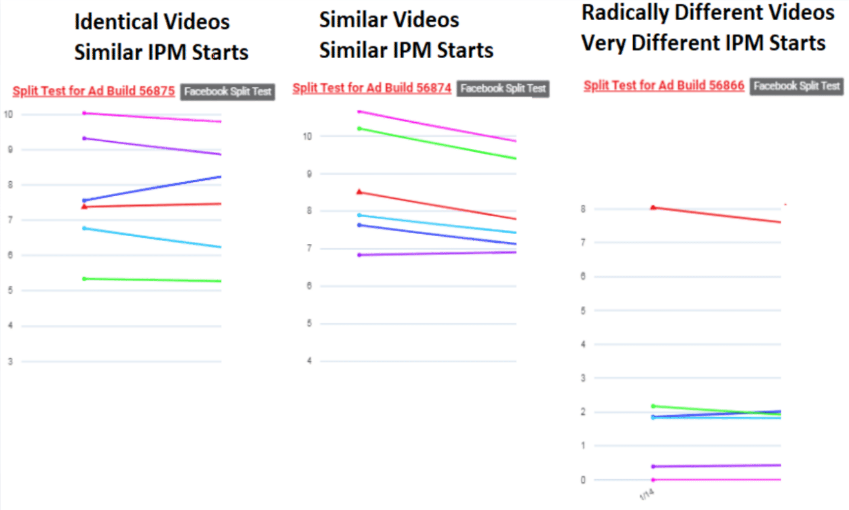

The IPMs for all ads ranged from 7 to 11, even for the new ads that did not share a thumbnail with the control. The IPM for each ad appears at the far right of the image.

Third A/A test of video creative: One control and similar variations

Next, we tested six videos: one control and five variations that were visually similar to the control, although one looked very different to a human. IPMs ranged from 5 to 10. The IPM for each ad appears at the far right of the image.

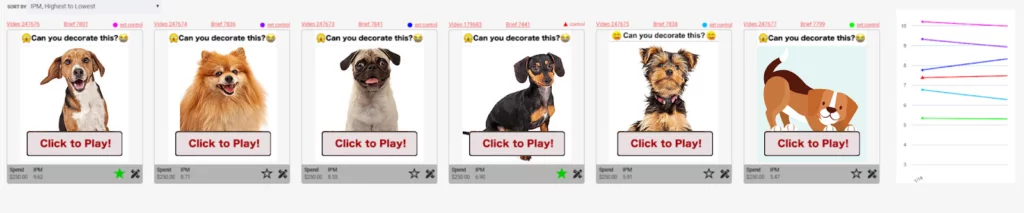

Fourth A/A test of video creative: One control and different ideas

This was when we had our “ah-ha!” moment. We tested six very different video concepts: the one control video and five brand new ideas, all of which were visually very different from the control video and did not share the same thumbnail.

The control’s IPM was consistent in the 8-9 range, but the IPMs for the new visual concepts ranged from 0 to 2. The IPM for each ad appears at the far right of the image.

Here are our impressions from the above tests:

Facebook’s split tests maintain a creative history for the control video. This gives the control an advantage under our non-standard, non-statistically relevant approach to IPM testing.

We are unclear if Facebook can group variations with a similar look and feel to the control. If it can, similar-looking ads could also start with a higher IPM based on influence from the control. Or perhaps similar thumbnails influence non-statistically relevant IPM.

Creative concepts that are visually very different from the control appear to not share a creative history. IPMs for these variations are independent of the control.

It appears that new, “out of the box” visual concepts vs the control may require more impressions to quantify their performance.

Our IPM testing methodology appears to be valid if we do NOT use a control video as the benchmark for winning.

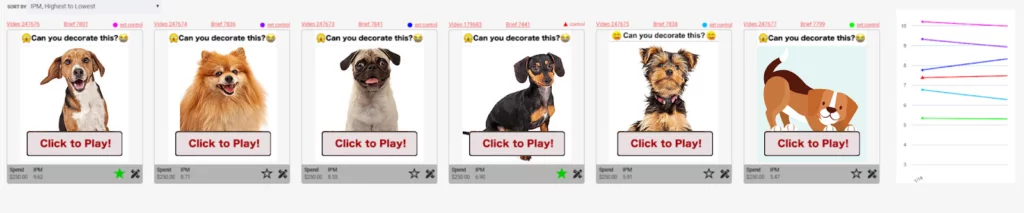

IPM Testing Summary

Here are the line graphs from the second, third, and fourth tests.

And here’s what we think they mean:

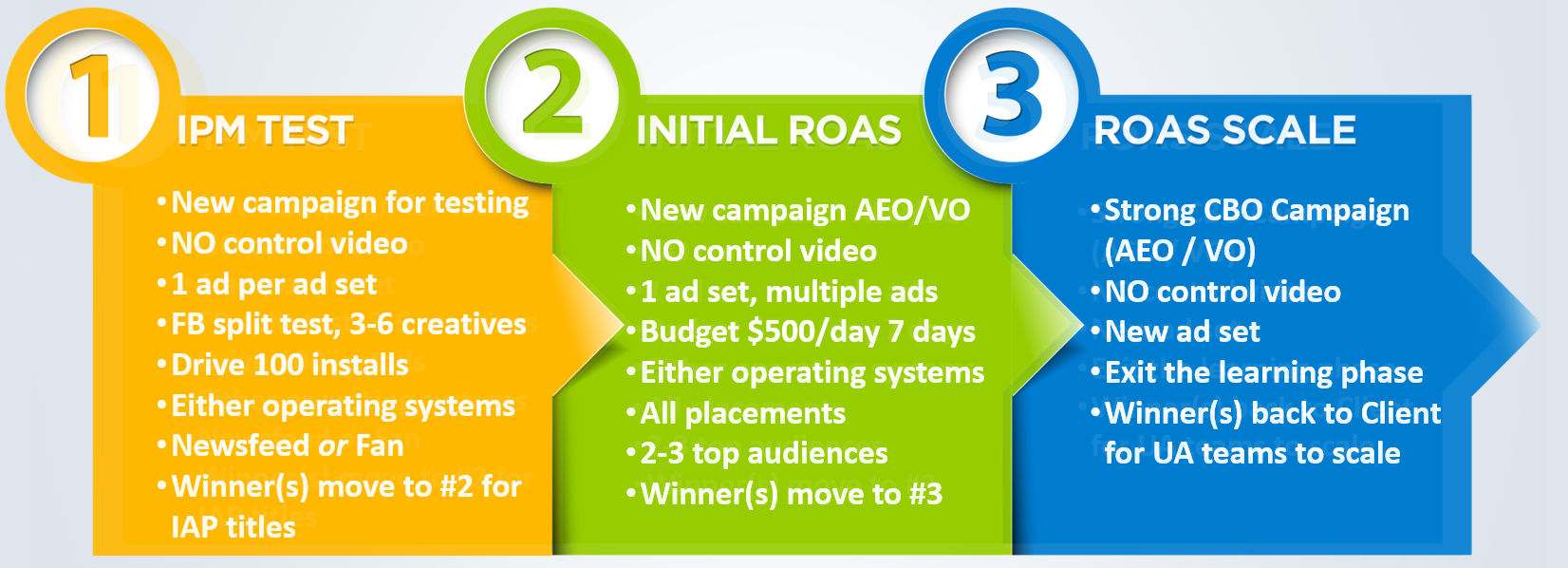

Creative Testing 2.0 Recommendations:

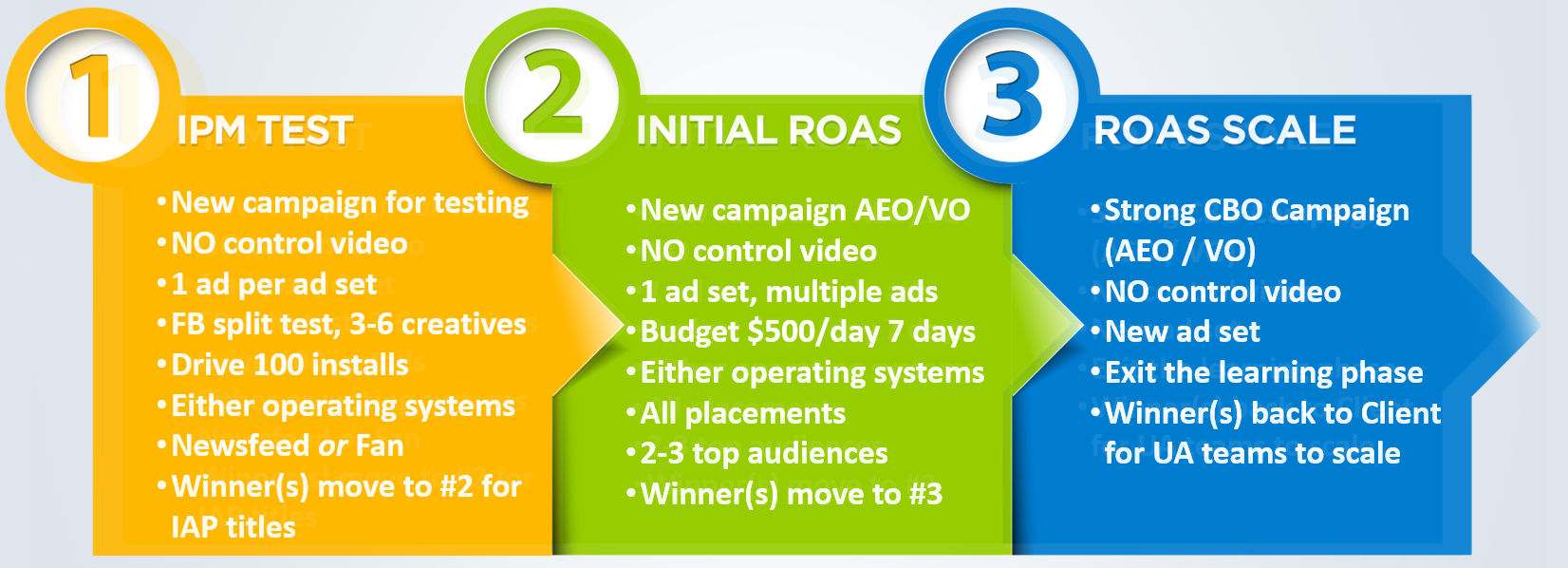

Given the above results, those of us testing using IPM have an opportunity to re-test past concepts in IPM tests that exclude the control video to determine if we’ve been killing potential winners. As such, we recommend the following three-phase testing plan.

Our 3-Phase Creative Testing Process

Phase 1: IPM Test (No Control Video)

- Create a new split test campaign using 3~6 new creatives (no control).

- Set up the campaign structure for basic App Install (no event optimization or value optimization)

- Spend an equal amount on each creative. Ex: One ad per ad set.

- Budget for at least 100 installs per creative

- $200~$400 spend per ad is recommended (based on a CPI of $2-$4) when testing in a Tier 1 (T1) English-speaking country

- $20~$40 spend per ad/adset testing in India (based on $0.20-$0.40 CPI)

- US Phase 1 testing.

- 10-15% LAL with a seed audience similar to past 90-day installers, or past 90 day payers.

- Non-US Phase 1 testing.

- Use broad targeting & English speakers only

- If not available in India, try other English-speaking countries with lower CPMs than U.S. and similar results. Ex: ZA, CA, IE, AU, PH, etc.

- Use the OS (iOS or Android) you intend to scale in production

- Use one body text

- Headline is optional

- FB Newsfeed or Facebook Audience Network placement only (not both and not auto placements)

- Be sure the winner has 100+ installs (50 installs acceptable in high CPI scenarios)

- 100 installs: 70% confidence with 5% margin of error

- 160 installs: 80% confidence with 5% margin of error

- 270 installs: 90% confidence with 5% margin of error

- IAP Titles: kill losers, top 1~3 winners go to phase 2

- IAA Titles: kill losers, allow top 1~3 “possible winners” to exit the learning phase and then put into “the Control’s” campaign
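The budget and install targets in the list above can be reproduced with a little arithmetic. The sketch below is our own reading, not something stated by Facebook: the install thresholds sit close to what the standard sample-size formula for a proportion gives at a 5% margin of error, assuming the worst-case proportion of 0.5. Function names are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def phase1_budget(target_installs: int, cpi: float) -> float:
    """Spend per creative needed to reach the install target at a given CPI."""
    return target_installs * cpi


def sample_size(confidence: float, margin: float = 0.05, p: float = 0.5) -> int:
    """Sample size for estimating a proportion (normal approximation, worst-case p)."""
    z = NormalDist().inv_cdf((1 + confidence) / 2)
    return ceil(z * z * p * (1 - p) / (margin * margin))


# 100 installs at a $2-$4 CPI gives the $200-$400 per-ad range quoted above.
print(phase1_budget(100, 2.0), phase1_budget(100, 4.0))

# Compare with the 100 / 160 / 270 install thresholds in the list.
for confidence in (0.70, 0.80, 0.90):
    print(f"{confidence:.0%} confidence: {sample_size(confidence)} installs")
```

The formula lands within a few installs of each threshold, so the list’s figures read as rounded versions of the same calculation.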

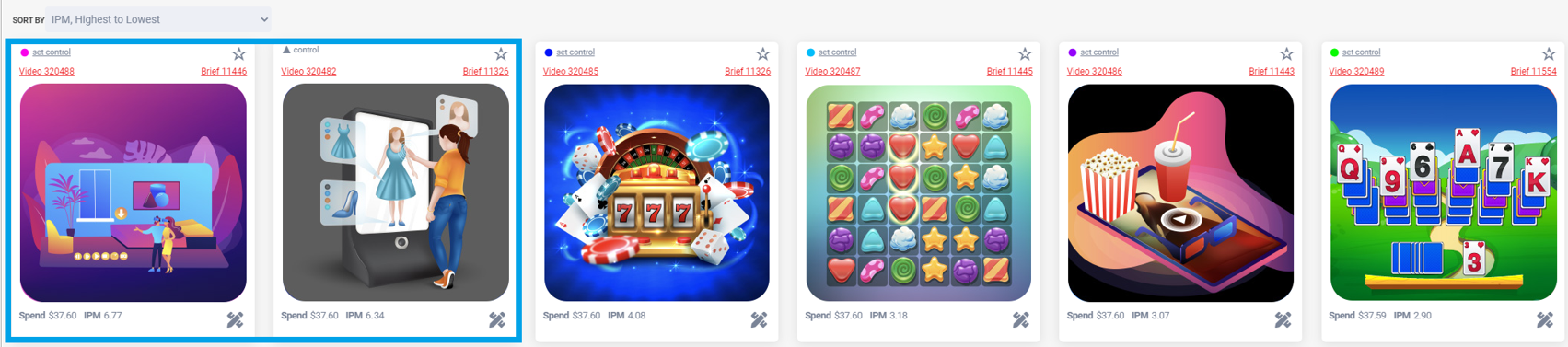

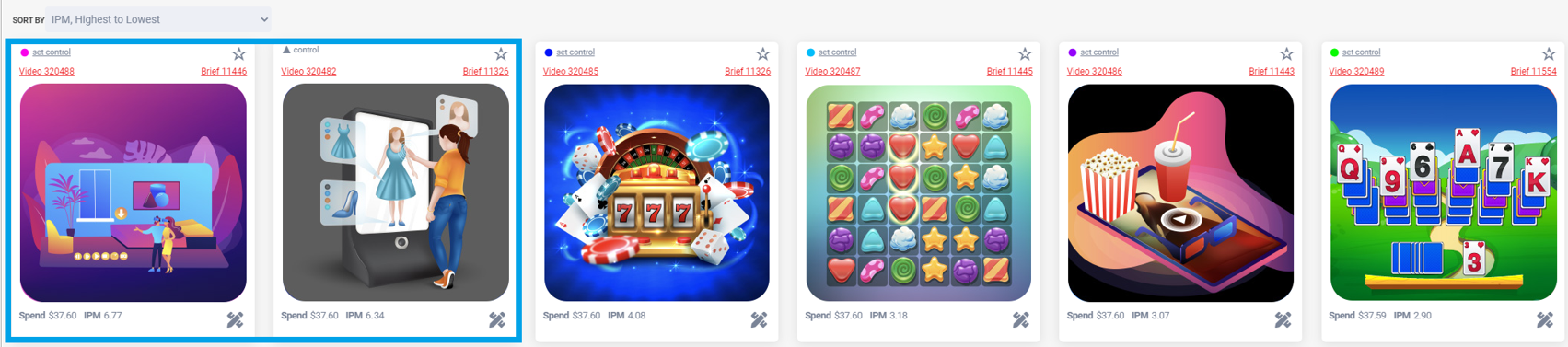

Which Creatives Move From Phase 1 > Phase 2?

- How To Pick A Phase 1 IPM Winner

- IPMs may range broadly or be clumped together

- Goal: kill obvious losers and test remaining ads in phase 2

- Ads (blue) have IPMs 6.77 & 6.34, move to phase 2

- If all ads are very close (e.g. within 5%), increase the budget

- For IAA (in-app advertising) titles, you may need more LTV data before scaling
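The selection rules above could be sketched roughly as follows. The 5% tie band and the cap of three winners come from the lists above. The 15% “keep” band is an assumption of ours (borrowed from the 10%-15% rule we used in the old control-based tests), and the function name and sample data are hypothetical.

```python
def pick_phase1_winners(results, max_winners=3, tie_band=0.05, keep_band=0.15):
    """Rank creatives by IPM and decide which ones advance to Phase 2.

    `results` maps creative name -> (installs, impressions).
    Returns (decision, creative names sorted by IPM, best first).
    """
    if not results:
        raise ValueError("results must not be empty")
    ipms = {name: 1000 * installs / impressions
            for name, (installs, impressions) in results.items()}
    ranked = sorted(ipms, key=ipms.get, reverse=True)
    best, worst = ipms[ranked[0]], ipms[ranked[-1]]
    # All ads clumped within the tie band: no clear loser yet, so gather more data.
    if len(ranked) > 1 and best > 0 and (best - worst) / best <= tie_band:
        return "increase budget", ranked
    # Otherwise keep ads within the (assumed) keep band of the best, capped at max_winners.
    keepers = [name for name in ranked if ipms[name] >= best * (1 - keep_band)]
    return "advance", keepers[:max_winners]


# Hypothetical test: two strong ads (IPMs near 6.77 and 6.34) and two obvious losers.
results = {"A": (100, 14_771), "B": (100, 15_773), "C": (100, 32_000), "D": (100, 50_000)}
print(pick_phase1_winners(results))  # ('advance', ['A', 'B'])
```

In practice the bands would be tuned per title, and IAA titles may need LTV data that this sketch does not consider.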

Phase 2: Initial ROAS (No Control Video)

- Create a new campaign with AEO or VO optimization

- Place all creatives into a single adset (Multi Ads Per Adset)

- Use IPM winner(s) from Phase 1 (you can combine winners from multiple Phase 1 tests into a single Phase 2 test)

- OS – Android or iOS. 5-10% LALs from top seeds (purchases, frequent users + purchase) + Auto Placements

- Testing can be done at a lower cost if you run this campaign in other countries where ROAS is similar or higher but CPMs are much lower than in the US, e.g. South Africa, Ireland, Canada, etc.

- Lifetime budget of $3,500-$4,900, or daily budgets of $500-$700 over the course of 5-7 days (depending on your $/purchase).

- WARNING! Skipping this step is highly likely to result in one of the following scenarios:

- Challenger immediately kills the champion/control but hasn’t achieved enough statistical relevance or exited the learning phase, and therefore the ROAS/KPI may not be sustained.

- Champion/control video has a lot more statistical history and relevance, has most likely exited the learning phase, and may immediately kill the challenger before it has a chance to get enough data to properly fight for ROAS.

Phase 3: ROAS Scale (No Control Video)

- Choose winner(s) from Phase 2 with good/decent ROAS

- You’ve proven the ad has great IPM and “can monetize”

- To win this phase, it must hit KPIs (D7 ROAS, etc.)

- Create a copy of an existing ad set

- Delete old ads and replace them with your Phase 2 winner(s)

- Allows new ads to spend in a competitive environment

- Then, create a new ad set, roll it out towards target audiences with solid ROAS / KPIs

- CBO controls budgets between ad sets with control creatives and ad sets with new creative winners.

- Intervene with adset min/max spend control only if new creatives don’t receive spend from CBO.

- Require challenger to exit the learning phase before moving to challenge the control “Gladiator” video

- Once the challenger has exited the learning phase, allow CBO to change budget distribution between challenger and champion

Note: We’re continuously testing our assumptions and discussing testing procedures with large Facebook advertisers.

We look forward to hearing how you’re testing and sharing more of what we uncover soon.


Please reach out to sales@ConsumerAcquisition.com if we can help with creative or media buying on Facebook and Google.
